10K to 20K in 83 Days: 5 Compounding Loops I Kept Running

Eighty-three days. X followers went from 10,000 to 20,000. That's roughly +120 per day, with no single day spiking 5,000 new followers. Peak weeks hit 900K views. Daily average hit 200K+. Even the slow periods held steady at 100K/day. On GitHub, 12 non-fork repos crossed 2,000 stars combined—top 3 landed at 896, 553, and 312.

But those numbers aren't the point of this article.

I get asked the same question constantly: "Why do you have so much AI content?" "How are you growing this fast?" I've asked myself the same thing. The honest answer: a few repeatable, small loops that compound. The more I run them, the smoother they get.

This isn't a post about "what I did." It's about what those loops look like, where they jam up, and how to get them spinning. If you want to build this kind of momentum, this is the blueprint.

Loop 1: Personal Tool → AI Packaging → Open Source → Content → Compounding

This was the strongest flywheel over these 83 days.

Four-step formula (feel free to copy it):

Step one: trigger. You have a real need. Or someone in an X reply or TG group asks, "Is there a tool for X?"

Step two: you already have it. A rough version. Something you've been running for a couple months.

Step three: packaging. Let Claude handle it: strip sensitive data, write a README, add LICENSE, add .gitignore, sketch a flowchart, make a bilingual version. One afternoon.

Step four: share. Push to GitHub. Post about the specific pain point it solves. Expand in an article. Drop the link in the replies.

The critical step is actually step two—you already have it. If you write code from scratch to "make something open source," the loop runs backward. It won't compound.
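A quick way to sanity-check step three before pushing is a tiny script that confirms the packaging files exist. This is a minimal sketch, not my actual tooling: the function name `missing_release_files` and the exact file names (`README.md`, `LICENSE`, `.gitignore`) are assumptions for illustration.

```python
from pathlib import Path

# Files the packaging step is supposed to produce.
# The exact names are an assumption; adjust to your own repo conventions.
REQUIRED_FILES = ("README.md", "LICENSE", ".gitignore")


def missing_release_files(repo_dir):
    """Return the required files that are not present in repo_dir."""
    root = Path(repo_dir)
    return [name for name in REQUIRED_FILES if not (root / name).is_file()]
```

Run it against the repo folder before step four. An empty list means the basics are in place; anything else is a to-do for Claude.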

Here are a few real examples, ranked by stars:

x-reader (896 ⭐) — I was scraping 7+ platforms daily for content summaries. Opening tabs one-by-one was slow. So I built a CLI that fetches and normalizes everything. Later, @yhslgg mentioned in replies he was using Firecrawl + Jina + Playwright stacked. I figured my approach was more direct, so I cleaned it up and open-sourced it. One post: 110K views.

https://x.com/runes_leo/status/2025363910056972411

claude-code-workflow (553 ⭐) — My Claude Code system (CLAUDE.md + memory + skills + hooks) took six months to stabilize. I kept seeing people ask, "How do you structure your CLAUDE.md?" I extracted the skeleton and open-sourced it as a template.

ai-health-vault (312 ⭐) — Built it for my parents: medical test archives + medication tracking, with Claude as a home health assistant backed by Obsidian. Completely off-topic from AI×Crypto, yet it opened up a non-technical audience segment.

The smaller ones: polymarket-toolkit (94), claude-video-kit (87), tg-reader-mcp (20), discord-cleanup (4), claude-skill-audit (2, just released 4/19), systematic-debugging-skill (1), humanizer-skill, content-pipeline-skill, runesleo profile.

None of these 12 repos were written "for open source." Each started as a script I was already using. I'd run it for weeks to verify it worked. Then I'd let Claude clean it up and ship it.

So the entry point to this loop isn't GitHub. It's the pile of scripts sitting on your machine right now. You've got a few rough scripts, a few prompts, a few skills you use every day. Pick one. Run through those four steps. Check the feedback. Decide if there's a second one worth shipping.

Loop 2: Bug → patterns.md → Recap Content

You write code. Bugs happen. You fix them. The pain lingers. By tomorrow, you've forgotten.

My current method: the moment I hit a debug wall that lasts 15+ minutes, get fact-checked by Claude three or more times, or stumble on something counterintuitive, I write one line in patterns.md. Just one line is fine. The key is writing it that day.

The file is now 2,100+ lines. I rarely re-read it. More importantly, Claude loads it automatically when we start a new session—so it can see every gotcha I've hit and avoid repeating them. Every Sunday, I pick the top 3 and turn them into individual posts. By month-end, they stack into long-form content.
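If typing the entry by hand is enough friction to make you skip it, a few lines of Python remove the excuse. This is a rough sketch under my own assumptions: the helper name `log_pattern` and the `- YYYY-MM-DD: lesson` entry format are illustrative, not a required layout.

```python
from datetime import date
from pathlib import Path


def log_pattern(lesson, path="patterns.md", today=None):
    """Append one dated, single-line entry to the patterns file."""
    today = today or date.today()
    # Collapse whitespace and newlines so every entry stays on one line.
    one_line = " ".join(lesson.split())
    entry = f"- {today.isoformat()}: {one_line}\n"
    with Path(path).open("a", encoding="utf-8") as f:
        f.write(entry)
    return entry
```

Appending (never rewriting) keeps the file safe to update mid-session, and the date prefix makes the Sunday review a simple scan of the last seven days.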

Real examples:

- "Automating Polymarket strategy shutdown requires 5 steps, not 1." I nearly lost money learning this the hard way. Post got ~10K views: https://x.com/runes_leo/status/2043108584393777640

- "Codex review must get independent success criteria. Don't let it iterate on top of my conclusions." One backtest had ~7 silent bugs stacked into fake PnL. Fixed them all and it collapsed to near-zero.

- "Visual consistency needs to be enforced at the hook layer, not by AI willpower." After violating the same rule 3 times, I realized document policies aren't enough. You need machine-enforced gates.

Each bug has two uses: ① I don't hit it again, ② I turn it into content. If you don't write it down, you paid tuition with no receipt.

Open a patterns.md file (or whatever you call it—the name doesn't matter). Write in real-time. Don't wait for Friday. Next month you'll find your content pool suddenly has dozens of raw ingredients.

Loop 3: Skip Quantity. Win With 1-2 Viral Posts Per Day

The most counterintuitive part of the growth data: what matters is how many posts hit 25K+, not how many you post.

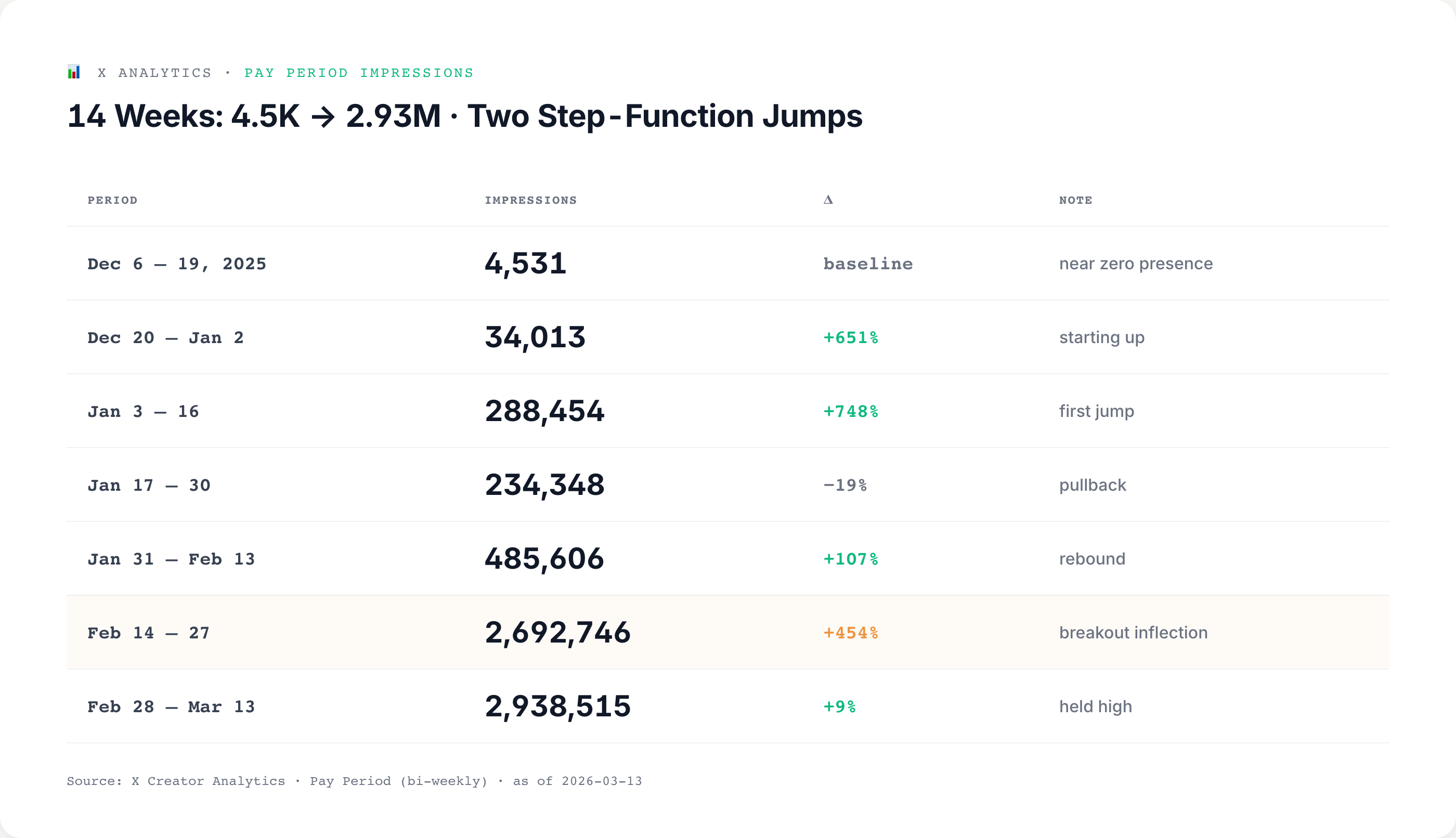

Data skeleton (measured in X analytics across 14 weeks):

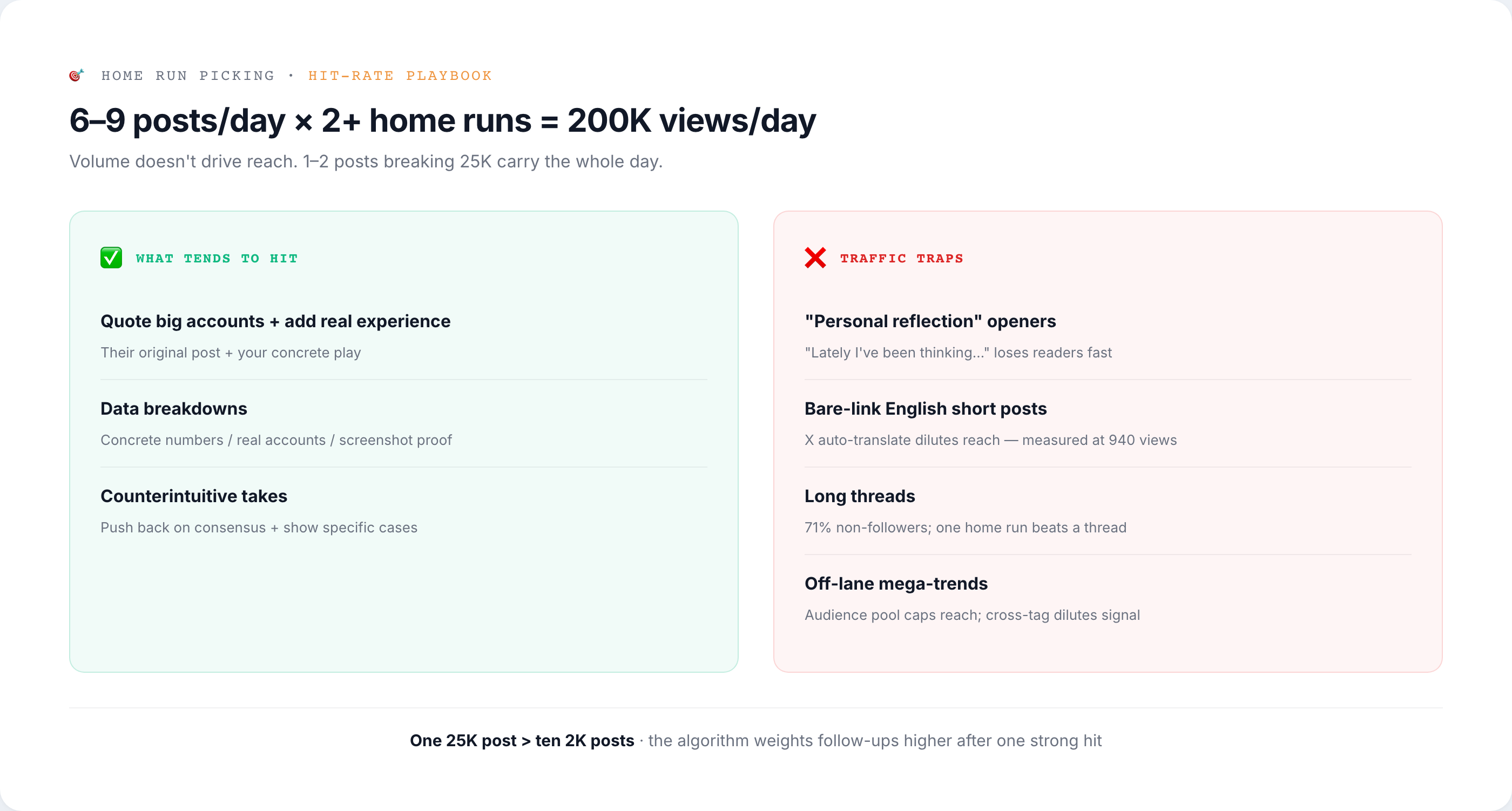

The formula is simple: I post 6-9 times a day, but it's the 2 or more posts breaking 25K that prop up 200K impressions/day.

But those "2+ viral posts" follow specific rules. It's not luck:

One ops habit: I don't use hashtags, I don't write long threads, and I don't chase mega-trends outside AI×Crypto. I do publish in English, but only as long-form (X Article + bilingual version on leolabs.me). No bare links in short posts.

Selection discipline beats "post more" by 100x. One 25K post per day beats ten 2K posts (25K views vs. 20K), and not just by math: in my experience, the algorithm weights subsequent posts higher when one post drives high engagement.
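To see how lopsided a day can be, here is a small sketch that counts how many posts cleared the 25K bar and what share of the day's views they carried. The function name `summarize_day` is hypothetical, and the view counts in the usage note are invented for illustration; they are not pulled from my analytics.

```python
def summarize_day(views_per_post, threshold=25_000):
    """Count posts at or above the threshold and their share of the day's views."""
    total = sum(views_per_post)
    hits = [v for v in views_per_post if v >= threshold]
    hit_share = sum(hits) / total if total else 0.0
    return {
        "posts": len(views_per_post),
        "hits": len(hits),
        "total": total,
        "hit_share": hit_share,
    }
```

Feed it a made-up seven-post day such as `[90_000, 60_000, 20_000, 12_000, 8_000, 6_000, 4_000]`: the total is 200,000 views, two posts clear the bar, and those two account for 75% of the day. The other five posts together add only 50,000.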

Loop 4: SSOT + Hooks Beat Willpower

The brain forgets. Rules get bent by habit. Setting rules then breaking them repeatedly—that's the default, not the exception.

My current system: extract all repeating decisions into SSOT files + enforce critical rules with hooks:

SSOT layer:

- High-engagement formula evolution (updated each Sunday after review: which categories pop, which have poor ROI)

- Topic pool (78 skeleton outlines, each with working title / core claim / target reader / visual notes)

- patterns.md (real-time bug logging, now 2,100+ lines)

- active-tasks.json (cross-session handoff panel for multi-model work—prevents tasks from vanishing)
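For a sense of what "SSOT" means in practice, here is one possible shape for a topic pool entry, using the four fields listed above. It is a sketch only: the class name `TopicOutline`, the snake_case field names, and the JSON storage format are my illustrative assumptions, not a description of the real files.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class TopicOutline:
    """One skeleton outline in the topic pool (field names are illustrative)."""
    working_title: str
    core_claim: str
    target_reader: str
    visual_notes: str


def dump_pool(outlines):
    """Serialize the pool to JSON so any session or model reads the same source."""
    return json.dumps([asdict(o) for o in outlines], ensure_ascii=False, indent=2)
```

The point of a fixed structure is that an outline missing its core claim or target reader is visibly incomplete, instead of hiding as a vague note.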

Hook layer (PreToolUse gate—fires when Claude writes a file):

- Visual output requires reading design-system.md first + auto-run streak detection (3 same-color cards = warning)

- Sensitive data scan on posts (API keys, wallet privkeys, 0x addresses)

- Watermark enforcement on cards (mandatory signature snippet)

Why hooks? I wrote the same rules into behaviors.md three times. I violated them three times. Text rules lose to habit too easily. Machines don't. Hooks are hard gates, not soft promises.
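Here is roughly what the sensitive data gate could look like. Treat it as a sketch under stated assumptions. The regexes are simplified illustrations (real API key formats vary by provider). The names `find_sensitive` and `check_event` are hypothetical. I'm also assuming the hook receives a JSON payload whose `tool_input.content` holds the text about to be written, and that exit code 2 signals a block, which is my understanding of the Claude Code hook convention. Check the current hooks documentation before relying on either.

```python
import re

# Illustrative patterns only; tune them to the secrets you actually handle.
SENSITIVE_PATTERNS = {
    # 64 hex chars, optionally 0x-prefixed (shape of an EVM private key)
    "evm_private_key": re.compile(r"\b(?:0x)?[0-9a-fA-F]{64}\b"),
    # 0x followed by exactly 40 hex chars (shape of an EVM address)
    "evm_address": re.compile(r"\b0x[0-9a-fA-F]{40}\b"),
    # something like API_KEY = "long-opaque-string"
    "api_key_assignment": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token)\b\s*[:=]\s*['\"]?[A-Za-z0-9_\-]{16,}"
    ),
}


def find_sensitive(text):
    """Return the sorted names of all patterns that match the text."""
    return sorted(name for name, rx in SENSITIVE_PATTERNS.items() if rx.search(text))


def check_event(event):
    """Map a hook payload to (exit_code, message); 2 means block (assumed convention)."""
    tool_input = event.get("tool_input") or {}
    hits = find_sensitive(str(tool_input.get("content", "")))
    if hits:
        return 2, "Blocked: possible sensitive data (" + ", ".join(hits) + ")"
    return 0, ""
```

A hook script would parse stdin with `json.load(sys.stdin)`, pass the result to `check_event`, print the message to stderr, and exit with the returned code. Keeping the decision in a pure function means the gate can be tested without running Claude at all.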

The tooling above (SSOT + hooks) created: leo-style skill (4 modes + humanizer), article-pipeline (the pipeline this article ran through), xhs-publish (WeChat Moments repurposing), distribute (one-click English + bilingual site).

Write rules in docs and they rot. Write them into hooks and they hold. This scales to any high-frequency repeating decision—config standards, code review checklists, ship checklists, commit formats. All are stronger baked into process than "team agreement."

Loop 5: Build → Self-Quote → Narrative Compounding

Every new post is a relay of old narrative.

Here's how: when you post something new, scroll back. Find an old post, old article, old repo that connects. Quote it in. One new post + one old post = one complete narrative arc.

Three proven compounding chains:

Milestone quotes are the simplest. January 26: "Hit 10K followers." April 19 (83 days later): "20K." I quoted the 10K post directly. Same voice, same milestone, two moments in time—the time gap itself tells the story. The account is growing.

https://x.com/runes_leo/status/2015640760323018880

https://x.com/runes_leo/status/2045889659293622312

The second: problem → solution in 24 hours. April 18 I audited my Claude Code setup. Found 61 skills, 1,388 calls in 11 days, but 6 critical rules written into my harness got 0 invocations—"rules are comments, code is harness." Post hit 27K views. People asked: can you share the audit tool? April 19 I cleaned up the scanning script, open-sourced it as skill-audit, and quoted the April 18 post. Problem post + solution post, back-to-back. Quote connects the arc.

https://x.com/runes_leo/status/2045645299088060776

https://x.com/runes_leo/status/2045856692110323899

Third: lesson learned → methodology article. April 12 I posted: "AI code review has blind spots too." I had Codex review my Claude-written code, and it came back ✅ reviewed. Then I ran a second, independent Codex review. Its first pass caught 3 blockers and its second pass caught 2 more: five bugs the first review missed. That post was a single data point. Two days later, April 14, I expanded it: that one point plus 23 earlier lessons of the same kind became a 14K-word paid X Article (30K views). One lesson becomes a systematic methodology. Density jumps geometrically.

https://x.com/runes_leo/status/2043470971898634599

https://x.com/runes_leo/status/2044009023713022094
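The audit from the second chain (count invocations, then flag anything defined but never called) boils down to very little code. This is not the open-sourced script, just a minimal sketch of the idea. The function name `audit_usage` and the input shapes (a list of defined names plus a flat list of logged call names) are assumptions.

```python
from collections import Counter


def audit_usage(defined_names, call_log):
    """Return per-name call counts and the sorted names that were never called."""
    counts = Counter(call_log)
    usage = {name: counts.get(name, 0) for name in defined_names}
    never_used = sorted(name for name, n in usage.items() if n == 0)
    return usage, never_used
```

The `never_used` list is the interesting output: those are the rules that exist on paper but never fire, which is exactly the gap a hook is meant to close.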

This loop has a higher bar than the first four—you need months of "back catalog" to begin. So don't think about it for the first three months. Get loops 1-4 spinning first. By month four, when you look back at your history, you'll suddenly spot all the narrative threads you can weave together.

The Close: Don't Copy Me. Apply These Loops to Your Own Lane

Four of these loops you can copy directly:

✅ Loop 1 (own tool → AI package → open source → content)

✅ Loop 2 (real-time bug logging in a patterns file)

✅ Loop 3 (selection discipline over volume)

✅ Loop 4 (SSOT + hooks)

Loop 5 requires months of back catalog. It's not a day-one play.

But three things I have—resources and timing—you don't need to replicate. Find your own path:

My lane is AI × Crypto. Yours could be anything else. Web3, NFTs, data visualization, a vertical SaaS, design tools, indie games, education products. The key: you're actually building there. Writing real code. Serving real customers. Shipping real products. Hitting real walls. Reading news and summarizing it gets views, but it doesn't close a loop.

I have dedicated time to build independently. You might be running this in your nights, weekends, or spare moments. That's fine. Loops can be slower. They can't leak. Running one complete cycle per week beats running incomplete cycles every day.

I have real money trading to source real data. You have real customers, real users, real projects with real data. Any number you've lived through beats any number you've invented.

Back to the question everyone asks—"Why so much AI content?"—the answer lives in loops 1 and 2: I'm writing code every day. I'm tuning strategies. I'm hitting walls. Content is the byproduct, never the plan. When your work itself is the ore, you never run out of material.

Your next move takes three things: pick one narrow lane you're actually in; run one complete minimum loop (build a tool you use → post about it → write one entry to your patterns file); check the data in 21 days.

One final hook—what's the tool you're using right now that you haven't open-sourced yet? Drop a link in the replies. I'll give you feedback on how to package it.

About the author: Leo (@runes_leo), AI × Crypto indie builder. Doing quantitative trading on Polymarket, building data analysis and automated trading systems with Claude Code and Codex.

leolabs.me — articles · community · open-source tools · indie projects · all platforms

X Subscription — paid weekly updates, or buy me a coffee ☕

Learn in public, Build in public.